The Real AI Revolution Is Affecting Workflows

The Rise of Fordism for Intellectual Services

The Real AI Revolution Is Organizational, Not Technological

There is a tempting but misleading narrative about artificial intelligence: that progress depends on building ever more powerful foundation models. In my view, the most consequential transformation underway is not happening in research labs. It is happening inside organizations, in the unglamorous work of redesigning how intellectual tasks are structured, sequenced, and executed (Jesuthasan 2025).

The deeper analogy here is historical. The first Industrial Revolution was not fundamentally about the steam engine. It was about reorganizing workflows, a transformation that eventually gave birth to Fordism: the systematic decomposition of physical labor into discrete, repeatable, auditable steps. What we are witnessing today is the emergence of an equivalent logic for cognitive work: a Fordism of intellectual services.

Following this analogy, let us venture a prediction about how AI will reshape working habits. The thesis is that AI is starting a new Fordist revolution for intellectual services and their workflows.

The Human as Organizational Glue

For decades, organizations delivering intellectual services, or the departments of companies that do so, could afford imprecision. Processes were loosely defined because the human mind is remarkably plastic: capable of navigating ambiguity, filling in gaps, and compensating for structural incoherence. Workers, knowingly or not, acted as the connective tissue between poorly articulated tasks (Mann 2026).

Implicitly or explicitly, intellectual workflows evolved toward a form of optimality. An optimal workflow distributes intellectual tasks among humans in such a way that nobody is waiting for someone else to finish a task. This is the essence of Agile Process Management: sharing information about who is about to wait for what, which enables flexible, on-the-fly reconfiguration by humans (Adeyemi Bode, Ragab, et al. 2024). The workflow does not need to be perfectly specified as long as information sharing is good enough.

The Productivity Paradox

A persistent observation in applied settings is that AI adoption rarely delivers the productivity gains organizations anticipate (Singla et al. 2025). The angle taken in this post is that this is not primarily a model-quality problem but a workflow-integration problem.

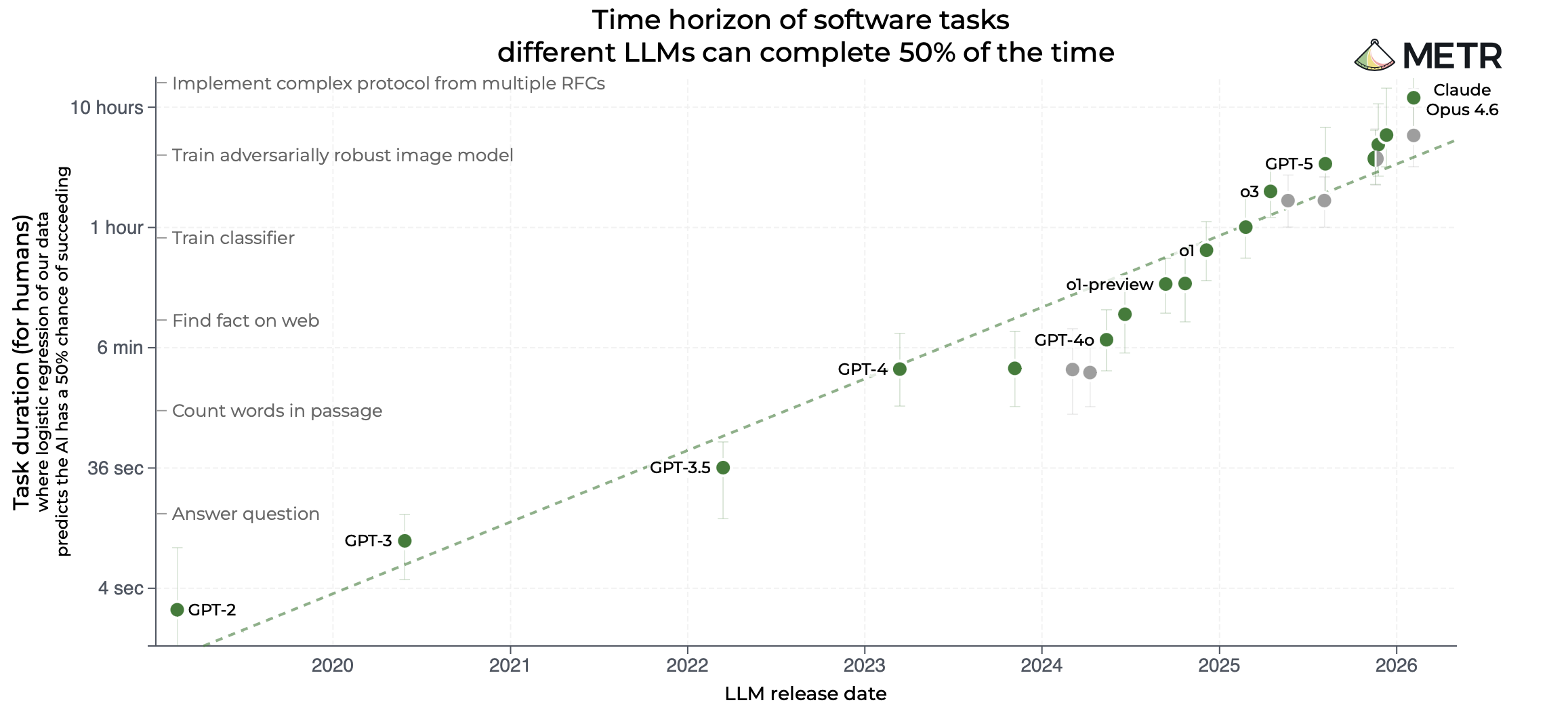

If one introduces AI into an existing (and hence at least roughly optimal) workflow, some tasks will be done faster (see Figure 1). Unless the whole workflow is autonomous (i.e. it has no external inputs and is fully operated by AI agents), the humans performing the other tasks will, as a result, simply wait more. Changing execution times therefore requires rethinking the workflow, since it is no longer optimal once execution speeds change.

When a handful of steps in a process are accelerated dramatically (say, a report that previously took a day now takes minutes), that gain only propagates if the downstream steps are designed to absorb the new throughput. In most organizations, they are not. The bottleneck shifts, the queue rebuilds elsewhere, and the system-level productivity improvement is negligible. This is the AI Workflow Integration Paradox: local efficiency turns into a negative externality for the broader process.
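To make the bottleneck argument concrete, here is a minimal Python sketch under strong simplifying assumptions: a strictly serial workflow, deterministic processing rates, unlimited buffers between steps, and hypothetical stage names and rates. In that idealized model, steady-state throughput equals the rate of the slowest stage, and every other stage spends the rest of its time waiting.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    rate: float  # tasks per hour the stage could complete if never starved or blocked


def throughput(stages: list[Stage]) -> float:
    """Steady-state throughput of a serial workflow: the slowest stage sets the pace."""
    return min(s.rate for s in stages)


def bottleneck(stages: list[Stage]) -> str:
    """Name of the stage that currently limits the whole workflow."""
    return min(stages, key=lambda s: s.rate).name


def idle_fractions(stages: list[Stage]) -> dict[str, float]:
    """Share of time each stage waits at steady state (0.0 means always busy)."""
    pace = throughput(stages)
    return {s.name: round(1 - pace / s.rate, 3) for s in stages}


def accelerate(stages: list[Stage], name: str, factor: float) -> list[Stage]:
    """Return a copy of the workflow where one stage runs `factor` times faster."""
    return [Stage(s.name, s.rate * factor) if s.name == name else s for s in stages]


if __name__ == "__main__":
    # Hypothetical workflow: drafting is the original bottleneck.
    before = [Stage("draft", 4.0), Stage("review", 5.0), Stage("approve", 6.0)]
    after = accelerate(before, "draft", 10.0)  # AI makes drafting 10x faster

    print(throughput(before), bottleneck(before))  # 4.0 draft
    print(throughput(after), bottleneck(after))    # 5.0 review
    print(idle_fractions(after))  # {'draft': 0.875, 'review': 0.0, 'approve': 0.167}
```

In this toy model, a tenfold local speed-up yields only a 25% system-level gain, and the bottleneck moves to review. In a perfectly balanced workflow (all rates equal), every idle fraction is zero, and accelerating a single step merely creates idle time for that step.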

Seen this way, introducing agentic AI into an organization acts as a diagnostic tool. Deploying agentic systems forces organizations to confront the latent dysfunction in their own workflows. The challenge is that this analysis has to be performed at the same time as the workflows are being redesigned to absorb the local accelerations that AI agents provide.

From Point Solutions to Production Lines

Zooming out to the level of the full collection of services a company operates, the same problem of poorly synchronized automated tasks within end-to-end workflows persists. Most organizations currently approach AI as a collection of isolated interventions: a chatbot for customer service, a summarization tool for legal teams, a voice agent for scheduling. The result is a fragmented archipelago of local improvements that rarely compound into systemic gains (Xia et al. 2024).

The industrial logic points in a different direction. Rather than grafting AI onto existing roles, the productive move is to treat intellectual work as a production line — decomposing it into atomic components that can be individually automated, monitored, and recombined. In this architecture, low-cost specialized components handle narrow, well-defined subtasks, feeding outputs into a central orchestration layer that manages the end-to-end workflow (Loaiza and Rigobon 2024).
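A minimal Python sketch of such a production line, assuming components are plain functions that take a work item (a dict) and return an enriched copy. The `Orchestrator` class and the components `extract`, `classify`, and `draft` are hypothetical illustrations, not a reference to any particular framework; in practice each component could wrap a small specialized model. The orchestrator chains the steps, records per-step durations, and parks failing items for human exception handling instead of dropping them.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Optional

# A component is any narrow step that takes a work item and returns an enriched copy.
Component = Callable[[dict], dict]


@dataclass
class Orchestrator:
    steps: list[tuple[str, Component]]
    timings: dict[str, list[float]] = field(default_factory=dict)
    escalations: list[tuple[str, dict, str]] = field(default_factory=list)

    def run(self, item: dict) -> Optional[dict]:
        """Push one item through every step; escalate to a human on failure."""
        for name, component in self.steps:
            start = time.perf_counter()
            try:
                item = component(item)
            except ValueError as err:
                # The item is parked for human exception handling, not silently dropped.
                self.escalations.append((name, item, str(err)))
                return None
            finally:
                # Per-step durations make the line observable and expose bottlenecks.
                self.timings.setdefault(name, []).append(time.perf_counter() - start)
        return item


# Hypothetical low-cost components, each handling one narrow subtask.
def extract(item: dict) -> dict:
    text = item["text"].strip()
    if not text:
        raise ValueError("empty request")
    return {**item, "text": text}


def classify(item: dict) -> dict:
    label = "invoice" if "invoice" in item["text"].lower() else "other"
    return {**item, "label": label}


def draft(item: dict) -> dict:
    return {**item, "reply": f"Routing your {item['label']} request."}


if __name__ == "__main__":
    line = Orchestrator([("extract", extract), ("classify", classify), ("draft", draft)])
    print(line.run({"text": "  Invoice #12 is overdue "}))
    print(line.run({"text": "   "}))  # None: escalated at the "extract" step
```

Keeping each component narrow means it can be swapped, monitored, or evaluated on its own, while the orchestrator remains the single place where the end-to-end sequence is defined.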

Contrary to what some blog posts may suggest (Xia et al. 2024), the role of human experts does not disappear in this model; it shifts. Just as the Fordist revolution did not eliminate skilled labor but redefined where skill was applied, agentic systems move expert value upstream: toward strategic oversight, exception handling, and quality validation, rather than the manual execution of routine cognitive tasks.

Like The Tramp (a.k.a. Charlot) in Modern Times (see the photo above), employees performing intellectual tasks may feel unsettled by having their job description explicitly boxed into a well-defined list of tasks; and, like him, they will find their current working pace challenged by the upstream and downstream AI agents working tirelessly. As in industrial production lines, I would not be surprised to see suggestion boxes appear in modern organizations, allowing the humans who experience day-to-day work alongside AI agents to propose local improvements that would otherwise fly under the radar of an emerging category of foremen.

Evaluation-Driven Development: Count to Understand, Understand to Act

During this revolution, organizations will need measurement tools. As an old saying in automation puts it: “count to understand, understand to act.” The collection of intellectual tasks performed by a team (or a group of teams) will have to be measured. This step matters because measuring forces one to genuinely understand the value each task adds to the whole workflow; how could one automate a process, introduce AI agents into it, and expect a return on investment without it?

One cannot redesign what one cannot observe. This points toward what might be called Evaluation-Driven Development (EDD) as a core design principle for the industrialization of intellectual services (Xia et al. 2024). EDD builds on the long evolution of Test-Driven Development (TDD) in software engineering. TDD relies on the observation that specifying a task is very close to writing a test that checks it works properly; writing this test before implementing a feature therefore removes many difficulties. It clarifies what has to be done and provides an executable, operational “definition of done.”
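A minimal Python sketch of this test-first habit applied to an intellectual task. The evaluation cases, labels, the 0.75 threshold, and the names `is_done` and `keyword_classifier` are hypothetical placeholders. The check is written first; any candidate, rule-based or agentic, is then judged against it. Requiring an accuracy threshold rather than a perfect score is an assumption suited to components whose outputs may vary.

```python
from typing import Callable

# Written BEFORE any agent exists: the executable "definition of done".
# Cases, labels and threshold are hypothetical placeholders.
EVAL_CASES: list[tuple[str, str]] = [
    ("Invoice #12 is overdue", "invoice"),
    ("Please reset my password", "account"),
    ("Where is my parcel?", "delivery"),
    ("The invoice total looks wrong", "invoice"),
]
REQUIRED_ACCURACY = 0.75


def is_done(candidate: Callable[[str], str]) -> tuple[bool, float]:
    """Score a candidate against the cases; 'done' means reaching the agreed accuracy."""
    hits = sum(candidate(text) == expected for text, expected in EVAL_CASES)
    accuracy = hits / len(EVAL_CASES)
    return accuracy >= REQUIRED_ACCURACY, accuracy


# Only then: a first implementation (a keyword baseline standing in for an agent).
def keyword_classifier(text: str) -> str:
    lowered = text.lower()
    if "invoice" in lowered:
        return "invoice"
    if "password" in lowered:
        return "account"
    return "other"


if __name__ == "__main__":
    print(is_done(keyword_classifier))  # (True, 0.75)
```

The baseline misses the delivery case but still meets the agreed bar; a later agent-based replacement would be held to exactly the same executable check.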

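Mathematically speaking, TDD can be read through the lens of the Curry-Howard correspondence, which establishes an equivalence between propositions and types, and between proofs and computer programs: a program of a given type is a proof of the corresponding proposition. By analogy, a test suite plays the role of the statement of a theorem, and a program that passes it plays the role of its proof, with the caveat that a finite collection of tests only specifies part of the intended behavior.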
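To make the correspondence concrete, here is a small Lean 4 illustration (the name `and_swap` is an illustrative choice). The type `p ∧ q → q ∧ p` is the proposition, and the function is a program inhabiting that type, hence a proof of it. Once it type-checks, the statement holds for every `p` and `q`; a test suite, by contrast, only checks finitely many cases, which is why the correspondence is best read as an analogy for TDD rather than a literal equivalence.

```lean
-- The proposition "p ∧ q implies q ∧ p" is a type; the function below is a program
-- inhabiting that type, and hence a proof of the proposition.
theorem and_swap (p q : Prop) : p ∧ q → q ∧ p :=
  fun ⟨hp, hq⟩ => ⟨hq, hp⟩
```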

Evaluation-Driven Development is the counterpart of TDD for non-deterministic systems such as AI agents. It treats evaluation not as a retrospective diagnostic but as a structural component of system design, operating at two levels:

- Offline evaluation — conducted before deployment — functions analogously to the precision tooling phase in manufacturing. Intellectual tasks are decomposed into their constituent components, and performance benchmarks are established under controlled conditions. This sets the baseline: a guarantee that the “intellectual machinery” meets functional and safety standards before entering a live workflow.

- Online evaluation — continuous monitoring once the system is operational — functions as the quality control layer. It detects asynchronies and bottlenecks as they emerge, enabling runtime adaptation and ensuring the system remains aligned with organizational objectives as they evolve.
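Online evaluation can start very simply. The Python sketch below keeps a rolling window of live outcomes and raises an alert when the rolling mean drops below the baseline measured offline, which ties the two evaluation levels together. The class name `OnlineMonitor`, the window size, the tolerance, and the binary success score are all hypothetical choices; the same pattern could track per-step waiting times or queue lengths to flag emerging bottlenecks.

```python
from collections import deque


class OnlineMonitor:
    """Rolling check of a live component against the baseline measured offline."""

    def __init__(self, baseline: float, tolerance: float = 0.1, window: int = 50):
        self.baseline = baseline    # offline score, established before deployment
        self.tolerance = tolerance  # degradation accepted before raising an alert
        self.scores = deque(maxlen=window)  # only the most recent outcomes are kept

    def record(self, score: float) -> bool:
        """Log one production outcome (e.g. 1.0 success, 0.0 failure); return True to alert."""
        self.scores.append(score)
        if len(self.scores) < self.scores.maxlen:
            return False  # not enough live evidence yet
        rolling = sum(self.scores) / len(self.scores)
        return rolling < self.baseline - self.tolerance


if __name__ == "__main__":
    monitor = OnlineMonitor(baseline=0.9, tolerance=0.15, window=10)
    outcomes = [1.0] * 10 + [0.0] * 3  # hypothetical live results
    alerts = [monitor.record(x) for x in outcomes]
    print(alerts.index(True))  # 12: the third consecutive failure triggers the alert
```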

EDD suggests thinking about the metrics before starting the automation, and thus building the monitoring dashboard before introducing AI agents into a workflow: that is online evaluation. It also proposes regularly testing the whole system, end to end and component by component, against a collection of use cases (i.e. datasets) with appropriate metrics. In the ML community, a collection of datasets and their associated metrics is known as a benchmark. Benchmarks are important because they make it possible to quantify statistically whether new versions or upgrades of components have a positive or negative effect on the end-to-end service.
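One simple way to quantify whether an upgrade helps is a paired sign test over per-case benchmark scores. The sketch below is an illustration, not a method prescribed by EDD: the scores are made up, ties are discarded, and alternatives such as a paired bootstrap or a Wilcoxon signed-rank test would be equally reasonable. The function name `sign_test` is hypothetical.

```python
from math import comb


def sign_test(old_scores: list[float], new_scores: list[float]) -> tuple[int, int, float]:
    """Paired two-sided sign test on per-case benchmark scores; ties are discarded.

    Returns (wins, losses, p_value), where a win means the new version scored higher.
    """
    if len(old_scores) != len(new_scores):
        raise ValueError("both versions must be scored on the same benchmark cases")
    wins = sum(n > o for o, n in zip(old_scores, new_scores))
    losses = sum(n < o for o, n in zip(old_scores, new_scores))
    trials = wins + losses
    if trials == 0:
        return wins, losses, 1.0  # no evidence either way
    # Under "no effect", wins ~ Binomial(trials, 0.5); double the smaller tail.
    k = min(wins, losses)
    tail = sum(comb(trials, i) for i in range(k + 1)) / 2 ** trials
    return wins, losses, min(1.0, 2 * tail)


if __name__ == "__main__":
    # Hypothetical per-case scores of two versions on the same 12 benchmark cases.
    old = [0.6, 0.7, 0.5, 0.8, 0.4, 0.6, 0.7, 0.5, 0.9, 0.6, 0.3, 0.8]
    new = [0.7, 0.8, 0.6, 0.9, 0.5, 0.7, 0.8, 0.6, 0.8, 0.6, 0.3, 0.9]
    print(sign_test(old, new))  # (9, 1, 0.021484375)
```

Here 9 wins against 1 loss gives p ≈ 0.021, so the improvement is unlikely to be noise at the conventional 5% level; with only a dozen cases, however, the test has limited power to detect smaller effects.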

Together, these two evaluation regimes replace ad hoc intuition with an instrumented feedback loop. The principle is simple but underappreciated: count to understand, understand to act.

Conclusion

The AI revolution, properly understood, is not about the sophistication of the underlying models. It is about the willingness and capacity of organizations to rethink legacy workflows, instrument their processes, and reconstruct intellectual production on an industrial basis.

Foundation models are necessary but not sufficient, and they are becoming a commodity. The firms and institutions that will capture disproportionate value from agentic AI are those that treat organizational redesign as a first-class engineering problem, and that build the measurement infrastructure required to manage cognitive work with the same rigor previously reserved for physical production.

The question is not whether an organization uses AI. It is whether that organization is ready to redesign its workflows to convert the local efficiency of AI agents into return on investment.